The Kafka source supports the following parameters.

topic: The Kafka topic from which this source reads messages. Flume supports only one topic per source.

groupID: The unique identifier of the Kafka consumer group. Set the same groupID in all sources to indicate that they belong to the same consumer group.

batchSize: The maximum number of messages that can be written to a channel in a single batch.

batchDurationMillis: The maximum time (in ms) before a batch is written to the channel. The batch is written when the batchSize limit or the batchDurationMillis limit is reached, whichever comes first.

Other properties supported by the Kafka consumer: Used to configure the Kafka consumer used by the Kafka source. You can use any consumer properties supported by Kafka. See the Apache Kafka documentation topic Consumer Configs for the full list of Kafka consumer properties.

For the Kafka sink, to avoid potential loss of data in case of a leader failure, set the sink's required-acknowledgments setting to -1, so that all replicas must acknowledge each message.

Other properties supported by the Kafka producer: Used to configure the Kafka producer used by the Kafka sink. You can use any producer properties supported by Kafka. Prepend the property name with the prefix kafka. See the Apache Kafka documentation topic Producer Configs for the full list of Kafka producer properties.

The Kafka sink uses the topic and key properties from the FlumeEvent headers to determine where to send events in Kafka. If the header contains the topic property, that event is sent to the designated topic, overriding the configured topic. If the header contains the key property, that key is used to partition events within the topic. Events with the same key are sent to the same partition. If the key is not specified, events are distributed randomly to partitions. Use these properties to control the topics and partitions to which events are sent through the Flume source or interceptor.

CDH includes a Kafka channel to Flume in addition to the existing memory and file channels. You can use the Kafka channel to write to Hadoop directly from Kafka without using a source, or to write to Kafka directly from Flume sources without additional buffering.
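The header-based routing described for the Kafka sink can be illustrated with a small simulation. This is a minimal sketch, not Flume or Kafka code: the function `route_event`, the topic name `weblogs`, and the CRC32-based hashing are all assumptions chosen for illustration, and the real partitioner may hash keys differently.

```python
import random
import zlib
from typing import Dict, Optional, Tuple


def route_event(headers: Dict[str, str],
                configured_topic: str,
                num_partitions: int,
                rng: Optional[random.Random] = None) -> Tuple[str, int]:
    """Return the (topic, partition) an event would be sent to.

    Illustration only: the hashing scheme is an assumption, not the
    partitioner that Kafka or Flume actually uses.
    """
    chooser = rng if rng is not None else random
    # A "topic" header overrides the topic configured on the sink.
    topic = headers.get("topic", configured_topic)
    key = headers.get("key")
    if key is None:
        # No key header: the event goes to a randomly chosen partition.
        partition = chooser.randrange(num_partitions)
    else:
        # Same key -> same hash -> same partition within the topic.
        partition = zlib.crc32(key.encode("utf-8")) % num_partitions
    return topic, partition


if __name__ == "__main__":
    # Hypothetical events: one keyed, one keyed with a topic override.
    print(route_event({"key": "user-42"}, "weblogs", 4))
    print(route_event({"topic": "errors", "key": "user-42"}, "weblogs", 4))
```

In this sketch, two events that carry the same key always map to the same partition number, while events without a key can land on any partition.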

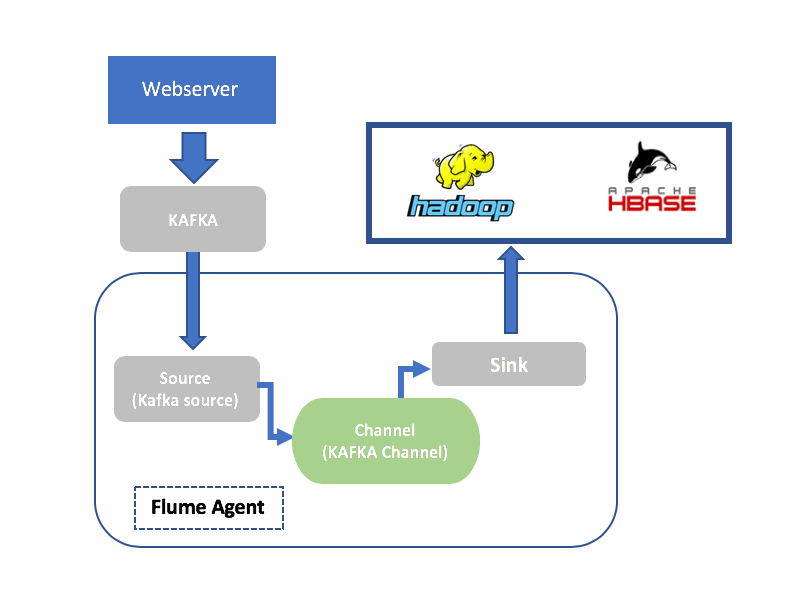

Use the Kafka source to stream data in Kafka topics to Hadoop. The Kafka source can be combined with any Flume sink, making it easy to write Kafka data to HDFS, HBase, and Solr.

A Flume configuration can use a Kafka source to send data to an HDFS sink. In such a configuration, the HDFS sink setting fileType = DataStream writes events as an uncompressed data stream instead of the default SequenceFile format.

For higher throughput, configure multiple Kafka sources to read from the same topic. If you configure all the sources with the same groupID and the topic contains multiple partitions, each source reads data from a different set of partitions, improving the ingest rate.

Among the parameters that the Kafka source supports is the URI of the ZooKeeper server or quorum used by Kafka. This can be a single host (for example, :2181) or a comma-separated list of hosts in a ZooKeeper quorum (for example, :2181,:2181,...).
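To see why sharing a groupID across several sources raises the ingest rate, it may help to picture how a topic's partitions could be divided among the members of one consumer group. The sketch below is a simplified round-robin model, not Kafka's actual assignment strategy; the function `assign_partitions` and the source names are hypothetical.

```python
from typing import Dict, List


def assign_partitions(partitions: List[int],
                      sources: List[str]) -> Dict[str, List[int]]:
    """Spread partitions over the sources of one consumer group, round-robin.

    Simplified model: Kafka's real assignment strategy may differ.
    """
    if not sources:
        raise ValueError("at least one source is required")
    assignment: Dict[str, List[int]] = {name: [] for name in sources}
    for index, partition in enumerate(sorted(partitions)):
        # Each partition is owned by exactly one source in the group.
        owner = sources[index % len(sources)]
        assignment[owner].append(partition)
    return assignment


if __name__ == "__main__":
    # Six partitions shared by three sources with the same groupID.
    print(assign_partitions(list(range(6)), ["source1", "source2", "source3"]))
```

Because every partition has a single owner, the sources read disjoint sets of partitions in parallel. Adding more sources than there are partitions would leave some sources idle in this model.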
